This Week in AI: Ex-OpenAI staff call for safety and transparency

Hiya, folks, and welcome to TechCrunch's inaugural AI newsletter. It's truly a thrill to type those words -- this one's been long in the making, and we're excited to finally share it with you.

With the launch of TC's AI newsletter, we're sunsetting This Week in AI, the semiregular column previously known as Perceptron. But you'll find all the analysis we brought to This Week in AI and more, including a spotlight on noteworthy new AI models, right here.

This week in AI, trouble's brewing -- again -- for OpenAI.

A group of former OpenAI employees spoke with The New York Times' Kevin Roose about what they perceive as egregious safety failings within the organization. They -- like others who've left OpenAI in recent months -- claim that the company isn't doing enough to prevent its AI systems from becoming potentially dangerous and accuse OpenAI of employing hardball tactics to keep workers from sounding the alarm.

The group published an open letter on Tuesday calling for leading AI companies, including OpenAI, to establish greater transparency and more protections for whistleblowers. "So long as there is no effective government oversight of these corporations, current and former employees are among the few people who can hold them accountable to the public," the letter reads.

Call me pessimistic, but I expect the ex-staffers' calls will fall on deaf ears. It's tough to imagine a scenario in which AI companies not only agree to "support a culture of open criticism," as the undersigned recommend, but also opt not to enforce nondisparagement clauses or retaliate against current staff who choose to speak out.

Consider that OpenAI's safety commission, which the company recently created in response to initial criticism of its safety practices, is staffed entirely with company insiders -- including CEO Sam Altman. And consider that Altman, who at one point claimed to have no knowledge of OpenAI's restrictive nondisparagement agreements, himself signed the incorporation documents establishing them.

Sure, things at OpenAI could turn around tomorrow -- but I'm not holding my breath. And even if they did, the change would be tough to trust.

News

AI apocalypse: OpenAI's AI-powered chatbot platform, ChatGPT -- along with Perplexity, Anthropic's Claude and Google's Gemini -- went down this morning at roughly the same time as the others. All the services have since been restored, but the cause of their downtime remains unclear.

OpenAI exploring fusion: OpenAI is in talks with fusion startup Helion Energy about a deal in which the AI company would buy vast quantities of electricity from Helion to provide power for its data centers, according to the Wall Street Journal. Altman has a $375 million stake in Helion and sits on the company's board of directors, but he reportedly has recused himself from the deal talks.

The cost of training data: TechCrunch takes a look at the pricey data licensing deals that are becoming commonplace in the AI industry -- deals that threaten to make AI research untenable for smaller organizations and academic institutions.

Hateful music generators: Malicious actors are abusing AI-powered music generators to create homophobic, racist and propagandistic songs -- and publishing guides instructing others how to do so as well.

Cash for Cohere: Reuters reports that Cohere, an enterprise-focused generative AI startup, has raised $450 million from Nvidia, Salesforce Ventures, Cisco and others in a new tranche that values Cohere at $5 billion. Sources familiar with the matter tell TechCrunch that Oracle and Thomvest Ventures -- both returning investors -- also participated in the round, which was left open.

Research paper of the week

In a research paper from 2023 titled "Let's Verify Step by Step" that OpenAI recently highlighted on its official blog, scientists at OpenAI claimed to have fine-tuned the startup's general-purpose generative AI model, GPT-4, to achieve better-than-expected performance in solving math problems. The approach could lead to generative models less prone to going off the rails, the co-authors of the paper say -- but they point out several caveats.

In the paper, the co-authors detail how they trained reward models to detect hallucinations, or instances where GPT-4 got its facts and/or answers to math problems wrong. (Reward models are specialized models that evaluate the outputs of AI models, in this case math-related outputs from GPT-4.) The reward models "rewarded" GPT-4 each time it got a step of a math problem right, an approach the researchers refer to as "process supervision."

The researchers say that process supervision improved GPT-4's math problem accuracy compared to previous techniques of "rewarding" models -- at least in their benchmark tests. They admit it's not perfect, however; GPT-4 still got problem steps wrong. And it's unclear how the form of process supervision the researchers explored might generalize beyond the math domain.
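To make the distinction concrete, here is a minimal toy sketch in Python of rewarding every step versus rewarding only the final answer. It is not the paper's method: the `Step` type, the function names and the hand-written arithmetic checker are all hypothetical stand-ins for a learned reward model, chosen only to show where the two kinds of feedback differ.

```python
import operator
from typing import List, NamedTuple

# Toy stand-in for a learned reward model: it can only check integer arithmetic.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


class Step(NamedTuple):
    """One line of a worked solution, e.g. 3 * 4 = 12 (hypothetical format)."""
    left: int
    op: str
    right: int
    claimed: int


def step_reward(step: Step) -> float:
    """Return 1.0 if the claimed result of this single step is right, else 0.0."""
    return 1.0 if OPS[step.op](step.left, step.right) == step.claimed else 0.0


def outcome_reward(steps: List[Step], expected_answer: int) -> float:
    """Outcome-style feedback: one score, based only on the final answer."""
    return 1.0 if steps[-1].claimed == expected_answer else 0.0


def process_rewards(steps: List[Step]) -> List[float]:
    """Process-style feedback: one score per step."""
    return [step_reward(s) for s in steps]


# A flawed attempt at 2 + 3 * 4 (correct answer: 14).
flawed = [
    Step(3, "*", 4, 12),   # correct step
    Step(2, "+", 12, 15),  # arithmetic slip
]

print("outcome:", outcome_reward(flawed, expected_answer=14))
print("process:", process_rewards(flawed))
```

The outcome score only says the attempt failed, while the per-step scores show that the first step was fine and the second is where things went wrong. That finer-grained signal is the intuition behind process supervision, under the simplifying assumptions above.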

Model of the week

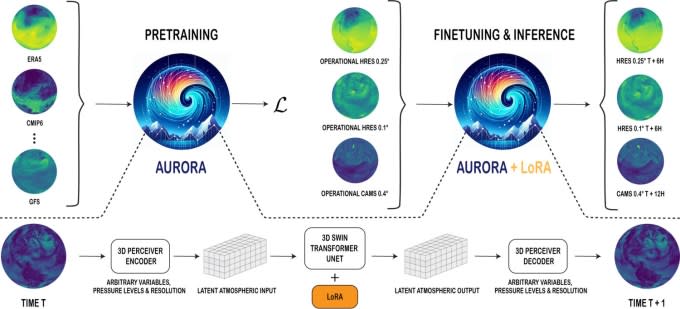

Forecasting the weather may not feel like a science (at least when you get rained on, like I just did), but that's because it's all about probabilities, not certainties. And what better way to calculate probabilities than a probabilistic model? We've already seen AI put to work on weather prediction at time scales from hours to centuries, and now Microsoft is getting in on the fun. The company's new Aurora model moves the ball forward in this fast-evolving corner of the AI world, providing globe-level predictions at ~0.1° resolution (think on the order of 10 km square).
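For a sense of where the "10 km" figure comes from, the back-of-the-envelope conversion from degrees to kilometers can be sketched in a few lines of Python. This assumes a perfectly spherical Earth with a mean radius of 6,371 km; the function name is hypothetical and none of this reflects how Aurora itself defines its grid.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; a spherical Earth is an assumption


def cell_size_km(resolution_deg: float, latitude_deg: float = 0.0):
    """Approximate (north-south, east-west) size in km of one grid cell."""
    km_per_degree = 2 * math.pi * EARTH_RADIUS_KM / 360.0
    north_south = resolution_deg * km_per_degree
    # Lines of longitude converge toward the poles, so east-west width shrinks.
    east_west = north_south * math.cos(math.radians(latitude_deg))
    return north_south, east_west


ns, ew = cell_size_km(0.1, latitude_deg=0.0)
print(f"At the equator: {ns:.1f} km x {ew:.1f} km")

ns, ew = cell_size_km(0.1, latitude_deg=60.0)
print(f"At 60 degrees latitude: {ns:.1f} km x {ew:.1f} km")
```

At the equator a 0.1° cell works out to roughly 11 km on a side, which is where "on the order of 10 km" comes from. Farther from the equator the cells narrow east to west, so the figure is best read as a rough upper bound.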

Trained on over a million hours of weather and climate simulations (not real weather? Hmm…) and fine-tuned on a number of desirable tasks, Aurora outperforms traditional numerical prediction systems by several orders of magnitude in computational speed, Microsoft says. More impressively, it beats Google DeepMind's GraphCast at its own game (though Microsoft picked the field), providing more accurate guesses of weather conditions on the one- to five-day scale.

Companies like Google and Microsoft have a horse in the race, of course, both vying for your online attention by trying to offer the most personalized web and search experience. Accurate, efficient first-party weather forecasts are going to be an important part of that, at least until we stop going outside.

Grab bag

In a thought piece last month in Palladium, Avital Balwit, chief of staff at AI startup Anthropic, posits that the next three years might be the last she and many knowledge workers have to work thanks to generative AI's rapid advancements. This should come as a comfort rather than a reason to fear, she says, because it could "[lead to] a world where people have their material needs met but also have no need to work."

"A renowned AI researcher once told me that he is practicing for [this inflection point] by taking up activities that he is not particularly good at: jiu-jitsu, surfing, and so on, and savoring the doing even without excellence," Balwit writes. "This is how we can prepare for our future where we will have to do things from joy rather than need, where we will no longer be the best at them, but will still have to choose how to fill our days."

That's certainly the glass-half-full view -- but one I can't say I share.

Should generative AI replace most knowledge workers within three years (which seems unrealistic to me given AI's many unsolved technical problems), economic collapse could well ensue. Knowledge workers make up large portions of the workforce and tend to be high earners -- and thus big spenders. They drive the wheels of capitalism forward.

Balwit makes references to universal basic income and other large-scale social safety net programs. But I don't have a lot of faith that countries like the U.S., which can't even manage basic federal-level AI legislation, will adopt universal basic income schemes anytime soon.

With any luck, I'm wrong.