ChatGPT Keeps Imploding Because of Crochet. (Seriously.)

Alex Woolner has a perfect name for a textile artist. She’s lived up to it, too, knitting as a hobby since she was 7. More recently, she picked up crochet as a way to make small stuffed animals called amigurumi for her nieces and nephews.

After finishing a stuffed narwhal for one of her nephews last month, she had an idea: What if she used ChatGPT—the AI chatbot that’s taken the internet and tech worlds by storm—to make a crochet pattern? She went online and instructed the chatbot: “Write me a crochet pattern for a narwhal stuffed animal using worsted weight yarn.”

Thus began Woolner’s long descent into the universe of AI-generated monstrosities. She followed ChatGPT’s directions for the object to a T.

The internet affectionately named the resulting narwhal (if you can call it one) Gerald.

Almost immediately after she began, Woolner could tell the project was going to go off the rails. Eyeballing the pattern, she noticed it did not tell her to stuff the narwhal’s tail, but instead to attach it limp and unstuffed to the main section. The narwhal’s typically blubbery, soda can-shaped body had been compressed into a pellet by the AI-generated pattern, and its protruding tooth looked more like a pouf befitting Marie Antoinette than a tusk. Woolner likened the finished product to an “angler fish chef.”

And once she posted the video to TikTok, the comments didn’t disappoint.

“that is a manatee in witness protection”

“Is that jay leno”

“It’s giving ‘Chris Rock from Fifth Element’”

“Undiscovered pokemon”

“this looks like an angry fish going to classical court in a powdered wig”

Still others professed affection for the creation.

“I fully back the concept of doing this as proof that AI shouldn’t be used to generate art, but also I wuv himb”

“this is absolutely terrifying, but in a really cute way 🥰”

“Not to be dramatic but, that inanimate object that is a crime against your craft is now my best friend.”

Woolner’s video has been viewed over 600,000 times since it was posted on Jan. 11. Since then, she’s also prompted ChatGPT to generate patterns for a cat, a “normal fish,” and a “stuffed birthday crochet.” Based on the unexpected demand, she has also started selling handmade replicas of Gerald and a downloadable pattern for users to make their own not-really-narwhal narwhals.

“I think that right now, AI is kind of frightening, and does things in ways that are a little bit incorrect,” Woolner told The Daily Beast. “But also we find it fascinating and kind of endearing, even in its mistakes. These crochet creatures are arguably very ugly and very wrong, but also cute.”

The last several weeks have unleashed a deluge of stories about what ChatGPT can sort of do: write academic essays, take medical exams, meal prep, and perhaps (one day) act as a courtroom lawyer. But for every success story, it seems there’s another highlighting its failures: Why can ChatGPT write a five-paragraph essay but not a good one? Why does it struggle with some sorts of logic questions and confidently declare incorrect answers to others?

Believe it or not, Woolner’s exercise in AI-directed crochet stands out among the tool’s most glaring failures—because while ChatGPT and similar AI tools are trained to be exceedingly good at predicting the next word in a sentence, they have an Achilles’ heel that most people, enamored by the allure of artificial intelligence, miss completely. And it turns out that the best way to illustrate this problem is a centuries-old textile-making process.

Sense and Sense-ability

I used to compete in Science Olympiad in high school (nerd alert!), and my team was always stumped by a nightmarish event called Write It Do It. A 3D object—made from Lego bricks, pipe cleaners, stickers, index cards, paperclips, and all manner of school detritus—is presented to one participant who has to reverse-engineer a set of directions for how to construct it. Another participant, who hasn’t seen the object, has to use the instructions and raw materials to build it.

It’s tough to explain this in words—though that’s the point!—so imagine writing down the instructions to build a snowman. You wouldn’t be able to just say “build a snowman.” Instead, you might write down: “Roll snowballs to form the body, then add two eyes, a carrot nose, and a mouth.” More complex objects create bigger hurdles, but a snowman seems simple enough, right?

When Lily Lanario asked ChatGPT to make her a crochet pattern for a snowman, though, something completely unexpected happened. As she followed the pattern, she realized she was crocheting a sphere that tapered off at the top, then grew into a second sphere—and then just kept going. The pattern, she told The Daily Beast, called for her to continuously crochet snowball after snowball. After crafting a handful, she gave up rather than waste the yarn, as it seemed like she would be making infinite snowballs otherwise.

ChatGPT may be able to create text that reads sensibly and coherently. But since conversation is its priority, there is an entire “language” that ChatGPT is simply not very good at: math. Logical reasoning and numerical thinking require a different skill set than interpreting and writing text.

And crochet has arguably more in common with math than any other art form. Like mathematics, knitting and crochet patterns are not written in full words that an AI can interpret, but rather a coded shorthand. One- and two-letter abbreviations stand for different stitch types, and without a key, the overall result looks like a string of gibberish.

“Anyone who reads a knitting pattern immediately is reading a code at a pretty sophisticated level,” Margaret Wertheim, a science writer and artist, told The Daily Beast. Wertheim and her twin sister run The Institute For Figuring—an organization at the intersection of aesthetics, science, and math—which is perhaps best known for a vast project of crocheted coral reefs.

Wertheim believes that AI like ChatGPT falters when designing crochet patterns because its outputs lack two factors she calls sense and sense-ability (no, that’s not a misspelling).

Sense describes the bot’s ability to produce a coherent pattern. Generally, ChatGPT creates instructions that can be followed but require some revision to prevent nonsensical errors, like when it asked Woolner to crochet one fin for Gerald then attach the fin “on either side of the body.” (She wound up making two fins.)

Sense-ability, on the other hand, is the knowledge that the pattern is meant to create a physical object. ChatGPT, however, creates instructions without knowing what the abbreviations stand for; it also has no concept of how the instructions will be used.

Sense (the pattern on the page) and sense-ability (the narwhal in your hands) might sound like fresh terms, but you’ve seen and used them when you learned basic algebra. Recall that the equation for a straight line is y = mx + b. Much like a crochet pattern, these symbols on a page (sense) make sense on their own and tell you what a final product will look like—in this case, a two-dimensional line (sense-ability). (Though it is vastly more difficult, if not impossible, to visualize the end product from looking at a crochet pattern’s written instructions.)

Without sense and sense-ability, ChatGPT and its ilk can’t comprehend crochet. “I think it's going to be a long time before the AIs are going to be capable of producing something that is actually a useful, interesting crochet pattern because they just don't have a sense of the thing ultimately being an object in physical space,” said Wertheim.

However, Daina Taimina, an emerita mathematics researcher at Cornell University, has another theory about why Lanario, Woolner, and others keep creating AI-generated abominations: Their instructions for the bot just aren’t that good. If you wanted to renovate your apartment, she said, you wouldn’t tell a contractor, “Renovate my apartment.”

Instead, you’d give them all the specifics—how to tile the bathroom, what room dimensions you’d like to see, and what color to paint the walls. In fact, specificity is a general pro tip for ChatGPT users, regardless of the end product.

I asked Woolner if she wouldn’t mind recreating Gerald the narwhal for the sake of this theory. Rather than asking the bot to make a crochet pattern for a narwhal, she gave it specific dimensions, colors, and even the brand of yarn that she wanted.

Two weeks later, she followed up. “This is possibly the most frustrating one I have ever tried to complete,” she wrote in an email. ChatGPT’s instructions, formerly in crocheting shorthand, now described each stitch individually, making it difficult to keep one’s place in the pattern. Worse, the pattern stopped making sense—rows would call for more stitches than existed in the piece, and entire regions like the body and tail were never finished.

After all that effort, Woolner’s second narwhal came out even less narwhal-looking than the first. Its body was a massive stuffed triangle, and its tusk looked like a gumdrop at one end.

Gerald II did not turn out well.

“So it has been a TASK…Still kind of a fascinating experiment,” she concluded.

Finishing the HAT3000

It’s clear that ChatGPT can’t crack crochet, and its ineptitude is more than an isolated quirk. How do we know that? Its predecessor struggled with the same issue, though it took a very different (incorrect) path to reach the same destination.

In 2019, a bot called HAT3000 that had been trained to create new patterns for crocheted hats came up with one called “Hang in Wind”: “Toss the pieces together. This is the windmill: Windmill in Wind: With a 1 cc ball of yarn, ch 14, join. Do not join, twist yarn to form a knot, or cut yarn.” Experienced crocheters and complete amateurs alike would find this pattern enigmatic, not least because it is not a hat. A user on a popular crochet forum constructed “Hang in Wind,” which charitably looks like a friendship bracelet for a mouse with two long ends. Realistically, it looks like a mistake.

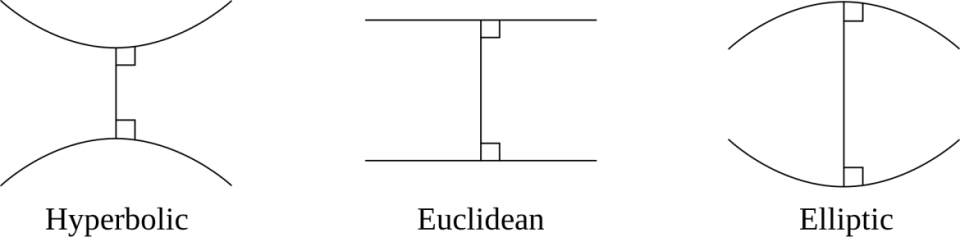

Non-Euclidean geometry refers to the types of shapes depicted on the left and right. Hyperbolic planes create a “negative” space as they move apart.

HAT3000, developed by AI experimenter and writer Janelle Shane, was made using a predecessor of the model that powers ChatGPT. Its training data were limited to several hundred patterns for crochet hats. It seemed like HAT3000 had been set up for success, but it did not pan out that way. Hang in Wind, while bizarre, was actually something of an exception. “My first indication that something was going wrong,” Shane wrote in a September 2019 blog post, “was when the hats kept exploding into hyperbolic super-surfaces.”

Her observation requires a bit of context. Decades ago, Taimina discovered a shocking way in which crochet could aid students of non-Euclidean geometry, a branch of mathematics that’s difficult to visualize in two and three dimensions. In 1997, years before 3D printing or virtual reality went mainstream, she saw crochet as an under-appreciated alternative to standard tactile model-making and figured out a way to crochet a hyperbolic plane, a model of non-Euclidean geometry that possesses a negative curvature, unlike a flat surface (no curvature) or an orb (positive curvature).

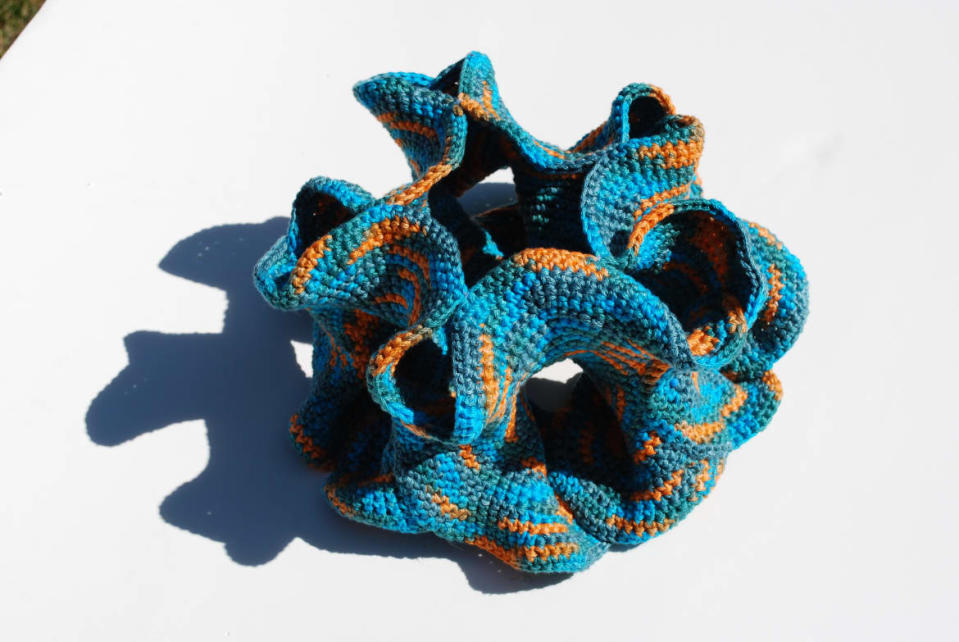

A hyperbolic plane in crochet form.

Though creating one had baffled mathematicians for centuries, the basic hyperbolic plane is astoundingly simple to crochet, according to a paper Taimina wrote in 2001. Starting with about 20 chain stitches, you crochet a row and increase by one stitch at the end, and continue ad nauseam. As long as you increase the number of stitches at a constant rate, you will create a hyperbolic plane—the higher the rate, the quicker your model will start to ruffle.
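To see why a constant rate of increase makes the fabric ruffle, here is a rough sketch in Python. It assumes that "constant rate" means adding one extra stitch after every N stitches in a row; the function name and its parameters are illustrative and are not taken from Taimina's paper.

```python
def row_stitch_counts(start=20, rows=6, increase_every=5):
    """Stitches per row when one extra stitch is added after
    every `increase_every` stitches (an assumed reading of
    "increase at a constant rate")."""
    counts = [start]
    for _ in range(rows - 1):
        prev = counts[-1]
        # Each full group of `increase_every` stitches earns one extra stitch.
        counts.append(prev + prev // increase_every)
    return counts


# A gentle rate (one increase per 5 stitches) versus a steep one (one per 2).
gentle = row_stitch_counts(20, 6, increase_every=5)
steep = row_stitch_counts(20, 6, increase_every=2)

print("gentle:", gentle)  # [20, 24, 28, 33, 39, 46]
print("steep: ", steep)   # [20, 30, 45, 67, 100, 150]
```

Because each row grows in proportion to the one before it, the edge gets longer roughly exponentially while the piece only gets one row taller at a time. The extra length has nowhere to lie flat, so it buckles into ruffles, and a smaller `increase_every` makes that happen sooner.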

It turned out HAT3000 had generated variation upon variation of Taimina’s pattern, without having ever been trained on it. When real crocheters tried to construct objects from these patterns, their creations ruffled and curled.

“Almost all of HAT3000’s patterns did this, eating yarn, eating sanity, becoming more and more hyperbolic, threatening to collapse into black holes,” Shane wrote. Initially baffled, she eventually concluded that this kept happening because HAT3000 trained on hat patterns that got bigger with each row. If the AI had understood what it was doing and had been able to control the rate at which it increased, its hats might have made more sense. Instead, HAT3000 created patterns lacking sense-ability without realizing anything was wrong.

ChatGPT seems to have shaken off the unintentional obsession with hyperbolic planes—a clear improvement from its predecessor. Shane told The Daily Beast in an email that the differences between her model and ChatGPT provide evidence that the latter has a much better memory. It also seems capable of piecing together conserved facets of most stuffed animal patterns—that they include eyes and legs, that most parts are round, and that they stick together. “I'm impressed that it remembers what animals have what parts, even if the parts aren't shaped correctly,” Shane said.

But in many ways, the chatbot’s pitfalls are just as profound as HAT3000’s. Despite our best guesses as to what could be going wrong, there’s no easy way to solve these problems.

No Man Is an A-Island

Warts and all, ChatGPT isn’t going away—though not everyone considers its output so flawed. When asked why it’s so difficult to train AI to generate crochet patterns, Shane replied, “What do you mean? I think ChatGPT's shrimp is beautiful.”

Moreover, “I think you could 100 percent get a decent pattern out of it,” Lanario said, although she added that some interpretation may be necessary in later stages. She recently crocheted a ChatGPT-generated pattern for a duck that looks like a rubber duck, especially once she embroidered the beak and added eyes (which, to be fair, the AI pattern did not include). Woolner added that the technology could be used as a shortcut to make crochet pattern designers’ work more efficient, an idea that may extend to the art world generally.

If it looks like a duck and quacks like a duck…

But for the moment, artists’ jobs are not at risk: AI simply isn’t good enough at what human creators can do. Even accounting for the uncanny valley not-quite-rightness of the final products, ChatGPT-generated stuffed animal patterns have a “generic” look to them, Shane said. After the excitement of the undertaking wore off, Lanario started to get bored by the AI’s reliance on a single, basic stitch in all of its patterns. The patterns also tend to construct spheres in a way that an experienced crocheter would find sophomoric and lumpy.

Grifters could take advantage of how easy it is to generate bad patterns with AI, and their victims wouldn’t realize they’d been duped until they had already purchased and downloaded a second-rate pattern. At the same time, more scrupulous crocheters could charge a premium for cleaning up and simplifying the AI’s handiwork.

The release of ChatGPT to the public late last year stoked the flames of a debate over whether AI will augment or put an end to creativity. Both sides of this argument typically take for granted that the eventual goal of artificial intelligence research is to optimize an AI to produce the best, most accurate and relevant answers. But what if AI’s “happy accidents” play a role in spurring creativity, too? It’s unlikely we would be talking about HAT3000 or TikTokers crocheting ChatGPT-generated patterns if either project’s results had been predictable. If we fine-tune AI models to make crochet patterns, we’ll necessarily shed the unexpected snafus that have been characteristic of them so far.

“Alas, yes, as crochet generators get better we lose exploding hats,” Shane said. But there’s still hope for more chaos down the road. “[W]e do gain round shrimp and round narwhal. I am delighted by them. I hope there is another hilarious stage beyond this, before we get to ‘Yup, looks like a shrimp.’”

Because if tomorrow’s AI can’t create Gerald or “Hang in Wind,” perhaps we’re all the worse for it.
